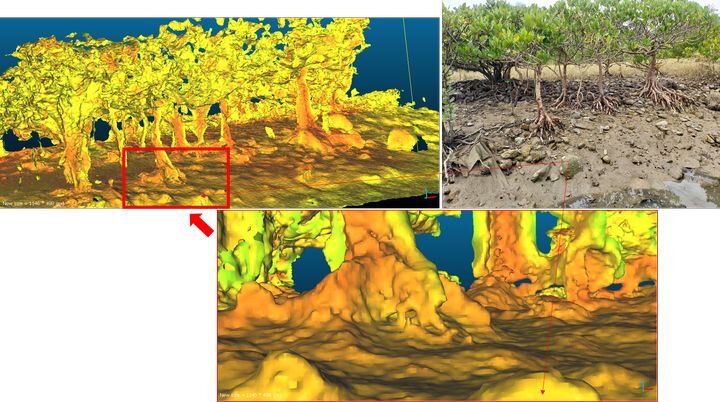

![Real time 3D comprehension [Source: 6D.ai]](https://fabbaloo.com/wp-content/uploads/2020/05/image-asset_img_5eb0a395a9862.jpg)

A reader comment got me thinking about the future of 3D printed repairs.

The other week we wrote a story about a club in Argentina that is pooling efforts to carry out 3D printed repairs of, in their case, toys. This is a very common scenario: a household item breaks, and the owner is forced to pay for expensive replacement parts or service, often far more than the parts actually cost.

If only one could simply 3D print the repair parts, things would be much simpler. Alas, that is often not possible, because the 3D model of the broken part is proprietary and kept secret by the original manufacturer. And why wouldn't they keep it secret? They can charge good money for repairs and parts.

Users are thus left to reverse-engineer the 3D model of the broken part themselves, a task often well beyond the skills of the general public, in spite of efforts like the one in Argentina mentioned above.

But reader Ben Reytblat added a very intriguing thought. He says:

“I’ve long thought that this issue is ripe for an AI-driven solution. A plausible solution would include the skill set of a CAD designer and a Mechanical Engineer, but on a small scale and at a low level of complexity.

It looks like the CAD world is starting to wake up to this issue. For example, Autodesk is making Generative Design features available for early adopters. So…. Although this doesn’t address the entire problem, it’s a good step in the right direction.

In the future, I believe we will end up with software tools that can communicate with the user interactively, using natural language (perhaps via speech recognition) and 3D visualization, and will guide the user to the solution while taking care of the underlying minutiae. I would call such tools Ai Assisted Design (AAD), and the process itself Intent-Driven Design.”

This sounds right to me as well, although it is obviously a long way off.

Or is it?

I saw a piece describing 6D.ai, an interesting startup based in San Francisco and Oxford, which might be part of that future solution. They say:

“We are tackling the first steps to making semantic 3D maps of the world on the hardware everyone already has in their pocket, so that you can create AR experiences that ‘just work’ in the physical world.”

Although 6D.ai is an augmented reality company, it is essentially capturing 3D models of the world around it in real time, and doing so with commonly available devices.

In the future, such a tool might be able to interact with advanced 3D CAD software in the way Reytblat describes. It would not be the whole solution, but it might serve as the "eyes" of a larger solution built around a cloud-based CAD system.

Just as a human engineer might fiddle with a broken part to derive its function, an AI system might be able to do the same if shown, in real time, different views of a part and its motion.

But again, this is so far away from today I can’t even.

Meanwhile, if you are interested, 6D.ai has opened its system for public beta testing.

Via TechCrunch and 6D.ai