![Apple iPhone-generated 3D scan [Source: Fabbaloo]](https://fabbaloo.com/wp-content/uploads/2020/05/apple-scan-1_result_img_5eb09d45a5973.jpg)

A report suggests that upcoming Apple iPhone designs may include powerful 3D scanning capabilities.

According to a Bloomberg report, Apple appears to be expanding its 3D camera technology in a way that might enable some extremely interesting 3D scanning applications, and not just the ones the company itself is pursuing.

Let’s review what Apple does today.

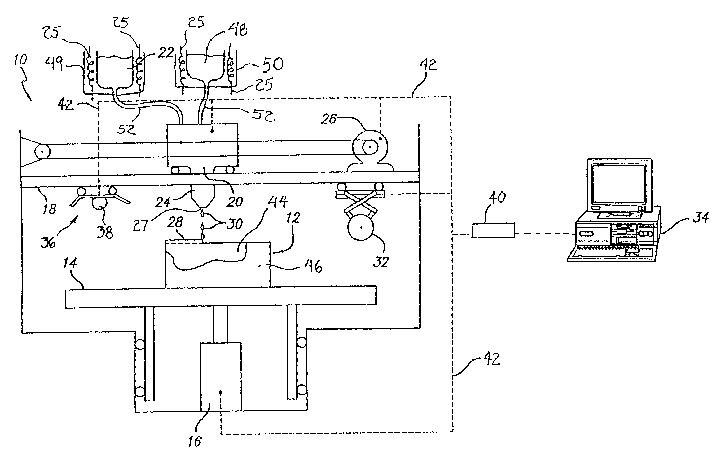

Since the introduction of the iPhone X, the front-facing camera on the device has included a 3D feature. The 3D scan takes place in a volume in front of the camera, out to a distance of about half a meter. The camera projects an array of invisible infrared dots onto the scene, and an infrared sensor reads how that dot pattern lands on the surfaces in front of it to generate a 3D depth map.

Why so small a volume? Because the purpose of this 3D camera is to enable facial recognition. The 3D depth map is analyzed to determine a number of physical factors, such as the distance between the eyes, and those are matched against the iPhone owner’s information for authentication.

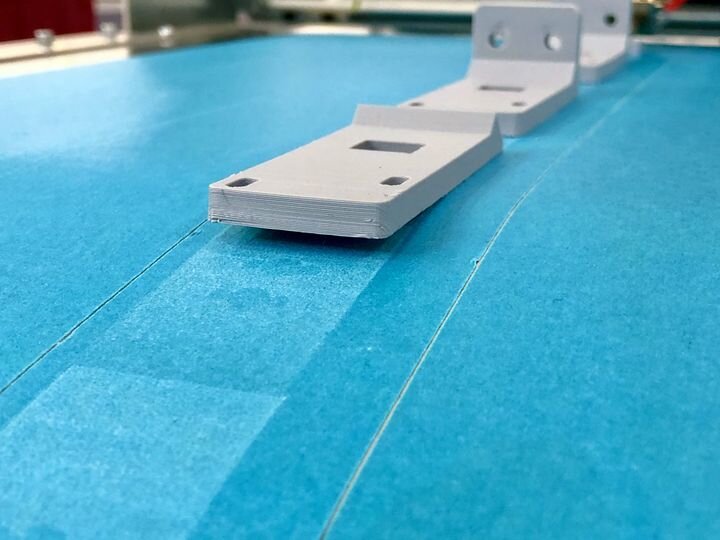

This was definitely not designed for 3D scanning, although some have used this hardware to do so, as we tested a few weeks ago. The “Capture” app worked reasonably well, but we found it extremely awkward to use because the 3D camera was on the wrong side of the phone. The Capture folks even contemplated building a kind of mirror attachment to help with this problem.

But now we hear that the new iPhones might have a 3D camera on BOTH sides. The rear-facing camera, the one that isn’t used to take selfies, is apparently getting a similar 3D capture capability, but with a difference.

The rear camera is to be laser-powered rather than relying on a pattern of infrared points. Apparently the infrared point approach does not work over longer distances, suggesting that the new camera would be specifically designed to capture much larger volumes.

Why would Apple want to do such a complex thing? Because of augmented reality. The idea is that you would be able to hold your phone up, and on the screen you’d see the real scene with added virtual elements. This is a powerful capability, as all manner of information could be provided. For example, you might see how your walls would look painted in a different color, identify who is in the scene by name, or measure the size of the tires on that car. And I can’t even imagine what kind of games might be possible.

That’s all fine, but I think there is another very powerful use to emerge from this hardware: 3D scanning.

If such hardware existed, then it would be a relatively straightforward project to build a full-on 3D scanning app — one that works from the correct side of the phone, too.
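As a rough illustration of the first step such an app might take, here is a minimal Python sketch that turns a depth map into a set of 3D points. It assumes a simple pinhole camera model; the function name `depth_map_to_points`, the focal lengths `fx` and `fy`, the image center `cx` and `cy`, and the tiny sample depth map are all made up for illustration and have nothing to do with Apple's actual hardware or software.

```python
def depth_map_to_points(depth, fx, fy, cx, cy):
    """Back-project a depth map into 3D points using a pinhole camera model.

    depth  : list of rows, each a list of distances in meters (0 = no reading)
    fx, fy : hypothetical focal lengths, in pixels
    cx, cy : hypothetical image center, in pixels
    """
    points = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0:
                continue  # skip pixels where the sensor returned no depth
            x = (u - cx) * z / fx  # horizontal offset from the optical axis
            y = (v - cy) * z / fy  # vertical offset from the optical axis
            points.append((x, y, z))
    return points


# A made-up 2x2 depth map: three valid readings and one missing pixel.
depth = [
    [0.5, 0.5],
    [0.0, 0.4],
]
points = depth_map_to_points(depth, fx=2.0, fy=2.0, cx=0.5, cy=0.5)
print(len(points), "points recovered")
```

A real scanning app would still need to align many such point sets captured from different angles and stitch them into a mesh, which is where most of the work lies.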

The scans would be of lower quality than one would obtain from proper industrial 3D scanners, but would certainly generate a ton of 3D models — and these could potentially be 3D printed.

Could we be seeing the emergence of a new driver for 3D printing? What if, say, three BILLION people are carrying these things around every day, capturing 3D scans? Certainly some of them will want 3D prints, and even a slim percentage would be a gigantic boost to the 3D print industry.

We await announcements from Apple.

Via Bloomberg
